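This chapter is fairly advanced. For detailed proofs, see a textbook on advanced classical linear regression; here I only walk through the concepts and prove the simple parts in passing : )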

I. Multiple Linear Regression Model

If there are several uncorrelated model variables $t_1, \dots, t_q$, they can be combined into a linear function:

$y(t_1,\dots,t_q;b_0, b_1, \dots, b_q )= b_0 + b_1 t_1 + \cdots + b_q t_q$

Written out for $n$ observations, this is the system of linear equations

$\begin{matrix}
b_0 + b_1 t_{11} + \cdots + b_j t_{1j}+ \cdots +b_q t_{1q} = y_1\\
b_0 + b_1 t_{21} + \cdots + b_j t_{2j}+ \cdots +b_q t_{2q} = y_2\\
\vdots \\
b_0 + b_1 t_{i1} + \cdots + b_j t_{ij}+ \cdots +b_q t_{iq}= y_i\\
\vdots\\
b_0 + b_1 t_{n1} + \cdots + b_j t_{nj}+ \cdots +b_q t_{nq}= y_n
\end{matrix}$

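The $t_{ij}$ are usually collected into the data matrix $A$, the parameters $b_j$ into the parameter vector $b$, and the observations $y_i$ into the vector $Y$, so the system can also be written as: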

$\begin{pmatrix}
1 & t_{11} & \cdots & t_{1j} & \cdots & t_{1q}\\
1 & t_{21} & \cdots & t_{2j} & \cdots & t_{2q}\\
\vdots & \vdots & & \vdots & & \vdots\\
1 & t_{i1} & \cdots & t_{ij} & \cdots & t_{iq}\\
\vdots & \vdots & & \vdots & & \vdots\\
1 & t_{n1} & \cdots & t_{nj} & \cdots & t_{nq}
\end{pmatrix}
\cdot
\begin{pmatrix}
b_0\\
b_1\\
b_2\\
\vdots \\
b_j\\
\vdots\\
b_q
\end{pmatrix}
=
\begin{pmatrix}
y_1\\
y_2\\
\vdots \\
y_i\\
\vdots\\
y_n
\end{pmatrix}$

That is, $Ab = Y$. Applying the method of least squares turns this system into the minimization problem $\min_b\|Ab-Y\|_2$.
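To make the matrix form concrete, here is a minimal sketch that builds $A$ with its leading column of ones and solves $\min_b\|Ab-Y\|_2$ with NumPy's `np.linalg.lstsq`. The data values and the "true" parameters $(1, 2, -1)$ are made up for illustration only.

```python
import numpy as np

# Hypothetical data: n = 6 observations of q = 2 model variables t_1, t_2.
t = np.array([[1.0, 2.0],
              [2.0, 1.0],
              [3.0, 4.0],
              [4.0, 3.0],
              [5.0, 6.0],
              [6.0, 5.0]])

# Observations generated exactly from b = (1, 2, -1), so the fit should recover it.
Y = 1.0 + 2.0 * t[:, 0] - 1.0 * t[:, 1]

# Data matrix A: a column of ones for b_0, followed by the t_ij.
A = np.column_stack([np.ones(len(t)), t])

# Solve min_b ||A b - Y||_2.
b, residual_ss, rank, singular_values = np.linalg.lstsq(A, Y, rcond=None)
print(b)
```

Because the made-up observations contain no noise, the recovered `b` matches the generating parameters up to rounding; with real data the fit would only be approximate.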

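Below are some results for multiple linear regression, with proofs. From here on the notation changes: the data matrix is written $X$, the parameter vector $\beta$, and the observations $y$, with the model $y = X\beta + \varepsilon$.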

1. Least squares estimator for β : $\hat\beta = (X'X)^{-1}X'y \, $

$S(b) = (y-Xb)'(y-Xb) \,$

(sum of squared residuals)

$0 = \frac{dS}{db'}(\hat\beta) = \frac{d}{db'}\bigg(y'y - b'X'y - y'Xb + b'X'Xb\bigg)\bigg|_{b=\hat\beta} = -2X'y + 2X'X\hat\beta$

(differentiating it with respect to b)

$\hat\beta = (X'X)^{-1}X'y \, $

( X has full column rank, and therefore X'X is invertible )
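The derivation above can be checked numerically. The sketch below, on simulated data with made-up parameters, solves the normal equations $X'X\hat\beta = X'y$ and confirms that the gradient $-2X'y + 2X'X\hat\beta$ vanishes at the solution. It uses `np.linalg.solve` instead of forming $(X'X)^{-1}$ explicitly, which is a numerical preference on my part rather than part of the derivation.

```python
import numpy as np

rng = np.random.default_rng(0)
n, k = 40, 3

# Simulated design matrix with an intercept column (full column rank).
X = np.column_stack([np.ones(n), rng.normal(size=(n, k - 1))])
beta = np.array([0.5, -1.0, 2.0])          # hypothetical true parameters
y = X @ beta + rng.normal(scale=0.3, size=n)


def S(b):
    """Sum of squared residuals S(b) = (y - Xb)'(y - Xb)."""
    r = y - X @ b
    return r @ r


# Normal equations: (X'X) beta_hat = X'y.
beta_hat = np.linalg.solve(X.T @ X, X.T @ y)

# First-order condition evaluated at beta_hat; should be numerically zero.
gradient = -2 * X.T @ y + 2 * X.T @ X @ beta_hat

print(beta_hat, gradient)
```

Any perturbation of `beta_hat` should increase `S`, which is a quick sanity check that the stationary point is a minimum.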

2. Expected value for $\hat\beta$ : $\beta$ (Unbiasedness)

$\begin{align}\operatorname{E}[\,\hat\beta] &= \operatorname{E}\Big[(X'X)^{-1}X'(X\beta+\varepsilon)\Big] \\ &= \beta + \operatorname{E}\Big[(X'X)^{-1}X'\varepsilon\Big] \\ &= \beta + \operatorname{E}\Big[\operatorname{E}\Big[(X'X)^{-1}X'\varepsilon|X \Big]\Big] \\ &= \beta + \operatorname{E}\Big[(X'X)^{-1}X'\operatorname{E}[\varepsilon|X]\Big] \\ &= \beta, \end{align} $

(using the law of iterated expectations and the assumption $\operatorname{E}[\varepsilon|X] = 0$)
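Unbiasedness is a statement about the average of $\hat\beta$ over many samples, so a small Monte Carlo experiment illustrates it well. The sketch below assumes standard normal errors and made-up true parameters; the design matrix is held fixed across replications, which matches conditioning on $X$.

```python
import numpy as np

rng = np.random.default_rng(1)
n, reps = 50, 2000
beta = np.array([1.0, 2.0, -0.5])           # hypothetical true parameters

# Design matrix held fixed across replications (conditioning on X).
X = np.column_stack([np.ones(n), rng.normal(size=(n, 2))])

estimates = np.empty((reps, beta.size))
for r in range(reps):
    eps = rng.normal(size=n)                # errors with E[eps | X] = 0
    y = X @ beta + eps
    estimates[r] = np.linalg.solve(X.T @ X, X.T @ y)

# Average of beta_hat over all replications; should be close to beta.
mean_estimate = estimates.mean(axis=0)
print(mean_estimate)
```

Individual estimates scatter around $\beta$, but their average settles on it as the number of replications grows.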

3. Covariance matrix of $\hat\beta$ : $\sigma^2 (X'X)^{-1}$, and $\operatorname{E}[\hat\sigma^2] = \frac{n-p}{n} \sigma^2$

$\begin{align} \operatorname{E}[\,(\hat\beta - \beta)(\hat\beta - \beta)^T] &= \operatorname{E}\Big[ ((X'X)^{-1}X'\varepsilon)((X'X)^{-1}X'\varepsilon)^T \Big] \\ &= \operatorname{E}\Big[ (X'X)^{-1}X'\varepsilon\varepsilon'X(X'X)^{-1} \Big] \\ &= \operatorname{E}\Big[ (X'X)^{-1}X'\sigma^2X(X'X)^{-1} \Big] \\ &= \operatorname{E}\Big[ \sigma^2(X'X)^{-1}X'X(X'X)^{-1} \Big] \\ &= \sigma^2 (X'X)^{-1}, \\ \end{align}$
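The covariance formula can be illustrated the same way. This sketch simulates $\hat\beta - \beta = (X'X)^{-1}X'\varepsilon$ directly, assuming homoskedastic normal errors with a made-up $\sigma = 1.5$, and compares the empirical second-moment matrix with $\sigma^2 (X'X)^{-1}$.

```python
import numpy as np

rng = np.random.default_rng(2)
n, reps, sigma = 30, 20000, 1.5             # sigma is an assumed error std. dev.

X = np.column_stack([np.ones(n), rng.normal(size=(n, 2))])
XtX_inv = np.linalg.inv(X.T @ X)

# One row of errors per replication, with E[eps eps' | X] = sigma^2 I.
eps = rng.normal(scale=sigma, size=(reps, n))

# Row r is (beta_hat - beta)' = eps_r' X (X'X)^{-1}, since (X'X)^{-1} is symmetric.
deviations = eps @ X @ XtX_inv

empirical_cov = deviations.T @ deviations / reps
theoretical_cov = sigma**2 * XtX_inv

print(np.round(empirical_cov, 4))
print(np.round(theoretical_cov, 4))
```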

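Let $P = X(X'X)^{-1}X'$ be the projection matrix onto the column space of $X$, and let $M = I_n - P$. The residuals are $\hat\varepsilon = My = M\varepsilon$, and the estimator considered here is $\hat\sigma^2 = \hat\varepsilon'\hat\varepsilon / n$, so $\operatorname{E}[\hat\sigma^2|X] = \frac{1}{n}\operatorname{E}[\varepsilon' M \varepsilon|X] = \frac{\sigma^2}{n}\operatorname{tr}(M)$. By the properties of a projection matrix, $P$ has p = rank(X) eigenvalues equal to 1, and all its other eigenvalues are equal to 0. The trace of a matrix is equal to the sum of its eigenvalues, thus tr(P) = p and tr(M) = n − p. Therefore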

$\operatorname{E}\,\hat\sigma^2 = \frac{n-p}{n} \sigma^2$
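The trace argument is easy to verify numerically. In this sketch $P = X(X'X)^{-1}X'$ and $M = I - P$ are built for a small simulated design (sizes and $\sigma^2 = 1$ are made up), and a simulation of $\hat\sigma^2 = \hat\varepsilon'\hat\varepsilon/n$ shows the downward bias factor $(n-p)/n$.

```python
import numpy as np

rng = np.random.default_rng(6)
n, p, sigma2 = 20, 3, 1.0                   # p = rank(X), intercept included

X = np.column_stack([np.ones(n), rng.normal(size=(n, p - 1))])

# Projection onto the column space of X, and its complement.
P = X @ np.linalg.inv(X.T @ X) @ X.T
M = np.eye(n) - P

trace_P = np.trace(P)                       # should equal p
trace_M = np.trace(M)                       # should equal n - p

# Simulate residuals M eps and the estimator sigma2_hat = eps' M eps / n.
reps = 5000
eps = rng.normal(scale=np.sqrt(sigma2), size=(reps, n))
residuals = eps @ M                         # row r is (M eps_r)' because M is symmetric
sigma2_hat = (residuals**2).sum(axis=1) / n

mean_sigma2_hat = sigma2_hat.mean()
expected = (n - p) / n * sigma2
print(trace_P, trace_M, mean_sigma2_hat, expected)
```

Dividing the residual sum of squares by $n - p$ instead of $n$ removes this bias, which is why that divisor is the usual choice.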

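II. Testing Multiple Hypotheses: the F-Test and Restricted Regression

1. Relationship between the t-test and the F-test

- t-test: the ratio of the difference between two treatment means to the standard error of that difference. It is generally used to compare two treatments, and its purpose is to reject, or fail to reject, the hypothesis that the two treatments have equal population means.

- F-test: a one-tailed test for inferring whether treatments differ, used mainly in analysis of variance (ANOVA). It only indicates that some difference exists; finding where requires pairwise comparisons, and the LSD method of pairwise comparison uses the t-test.

[Note] Relationship between the t-distribution and the F-distribution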

- The t-distribution is a special case of the more general F-distribution: the square of a t-distributed variable with T-k degrees of freedom follows an F-distribution with (1, T-k) degrees of freedom.

- But remember that if we use a 5% size of test, we will look up a 5% value for the F‐distribution because the test is 2‐sided even though we only look in one tail of the distribution. We look up a 2.5% value for the t‐distribution since the test is 2‐tailed.

2. Restricted regression

The restricted regression is the one in which the coefficients are restricted, i.e. the restrictions are imposed on some $\beta$.

In general, this previous information on the coefficients can be expressed as follows:

$\displaystyle R\beta=r$

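The test statistic for restricted regression : $test\ statistic = \frac{RRSS - URSS}{URSS} \times \frac{T - k}{m}$, where RRSS and URSS are the residual sums of squares of the restricted and unrestricted regressions, T is the number of observations, k is the number of regressors in the unrestricted regression (including the constant), and m is the number of restrictions. Under the null hypothesis it follows an F-distribution with (m, T-k) degrees of freedom.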

Hypotheses that are not linear in the coefficients cannot be tested in this framework, for example $H_0 : \beta_2 \beta_3 = 2$.
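As a rough illustration of both points in this part, the sketch below computes the F statistic from RRSS and URSS for a single restriction ($m = 1$, $H_0$: the last coefficient is zero, i.e. $R = (0, 0, 1)$ and $r = 0$) and checks that it equals the square of the usual t statistic for the same hypothesis. The data are simulated and the helper `rss` is my own name, not something from the references.

```python
import numpy as np


def rss(X, y):
    """Residual sum of squares from an OLS fit of y on X."""
    beta_hat, *_ = np.linalg.lstsq(X, y, rcond=None)
    resid = y - X @ beta_hat
    return resid @ resid


rng = np.random.default_rng(3)
T, k = 60, 3                                # observations, regressors (with constant)

X = np.column_stack([np.ones(T), rng.normal(size=(T, k - 1))])
y = X @ np.array([1.0, 0.8, 0.0]) + rng.normal(size=T)

# Unrestricted regression uses all columns; the restricted one imposes
# H0: last coefficient = 0 by dropping the last column.
m = 1
urss = rss(X, y)
rrss = rss(X[:, :2], y)
f_stat = (rrss - urss) / urss * (T - k) / m

# t statistic for the same hypothesis, from the unrestricted regression.
beta_hat = np.linalg.solve(X.T @ X, X.T @ y)
s2 = urss / (T - k)                         # unbiased error variance estimate
se = np.sqrt(s2 * np.linalg.inv(X.T @ X)[2, 2])
t_stat = beta_hat[2] / se

print(f_stat, t_stat**2)
```

With more than one restriction the same F formula applies with a larger `m`, but there is no single t statistic to compare it against.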

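III. Goodness of Fit of the Linear Regression Model

1. Coefficient of determination $R^2$

$R$ is the multiple correlation coefficient, and $R^2$ is called the multiple determination coefficient. It is the percentage of the total variation that the regression model explains, so it measures how well a set of independent variables jointly predicts the variation in the dependent variable. In other words, it is the share of the total variation in Y that the independent variables (X) account for, and it therefore reflects the goodness of fit of the linear regression model relating them.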

$SS_{\rm res}+SS_{\rm reg}=SS_{\rm tot}. \,$

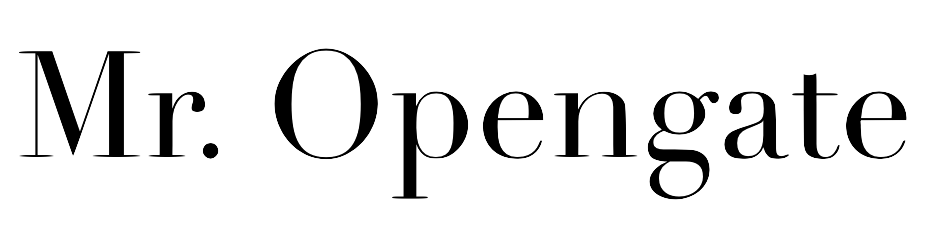

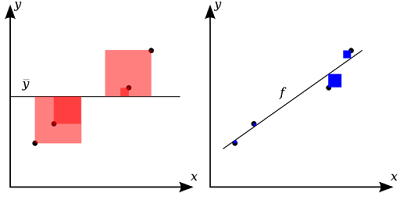

- The total sum of squares : $SS_\text{tot}=\sum_i (y_i-\bar{y})^2,$

- The regression sum of squares : $SS_\text{reg}=\sum_i (f_i -\bar{y})^2,$

- The residual sum of squares : $SS_\text{res}=\sum_i (y_i - f_i)^2\,$

$R^2 = 1 - \frac{\color{blue}{SS_\text{res}}}{\color{red}{SS_\text{tot}}}$
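Here is a small sketch of these quantities on simulated data (the coefficients are made up), where $f_i$ are the fitted values and $\bar y$ is the sample mean of $y$. It also checks the decomposition $SS_{\rm res}+SS_{\rm reg}=SS_{\rm tot}$, which relies on the model containing an intercept.

```python
import numpy as np

rng = np.random.default_rng(4)
n = 100

x = rng.normal(size=(n, 2))
y = 2.0 + x @ np.array([1.5, -0.7]) + rng.normal(size=n)

# OLS fit with an intercept; f holds the fitted values f_i.
X = np.column_stack([np.ones(n), x])
beta_hat, *_ = np.linalg.lstsq(X, y, rcond=None)
f = X @ beta_hat

ss_tot = np.sum((y - y.mean()) ** 2)        # total sum of squares
ss_reg = np.sum((f - y.mean()) ** 2)        # regression sum of squares
ss_res = np.sum((y - f) ** 2)               # residual sum of squares

r2 = 1 - ss_res / ss_tot
print(ss_tot, ss_reg + ss_res, r2)
```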

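Examples with R = 0 and R = 1:

[Get a feel for it] What $R^2$ means

- $R^2$ can be read as a proportional reduction in error (PRE).

- $R^2$ is a descriptive measure. By itself it does not measure the quality of a regression model; whether the model is usable is still decided by the p-value.

- The trouble with $R^2$ is that it keeps growing as more and more variables are added, even when those variables have no theoretical justification.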

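2. Adjusted coefficient of determination $\bar R^2$

In linear regression, adding a new $x_i$ never lowers $R^2$: it rises, or at least moves no further from 1, whether or not the new $x_i$ has anything to do with Y.

Adding more X's, however, reduces the model's degrees of freedom (df). Adjusted $R^2$ takes the df into account, so it can be positive or negative, but it is never larger than $R^2$, and it rises only when the added $x_i$ contributes enough explanatory power.

Statistical packages usually report R (the correlation coefficient), R squared, and adjusted R squared (adjusted R Square) together.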

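$\bar R^2 = {1-(1-R^{2}){n-1 \over n-p-1}} = {R^{2}-(1-R^{2}){p \over n-p-1}}$

$\bar R^2 = {1-{SS_\text{res}/df_e \over SS_\text{tot}/df_t}}$

Here $n$ is the sample size and $p$ is the number of explanatory variables, not counting the intercept, so that $df_t = n-1$ and $df_e = n-p-1$.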

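- Advantages

  - Adjusted $R^2$ does not keep rising just because more variables are added.

- Disadvantages

  - It loses the original interpretation: adjusted $R^2$ is no longer the percentage of variation explained.

  - Adjusted $R^2$ is sometimes misused as a method for selecting a suitable set of explanatory variables.

  - If the model does not include an intercept, $R^2$ as measured here is no longer appropriate.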
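The contrast between $R^2$ and $\bar R^2$ shows up clearly when pure noise regressors are added. The sketch below uses simulated data and a helper `r2_and_adjusted` of my own naming; it fits a one-variable model and then the same model padded with five irrelevant regressors.

```python
import numpy as np


def r2_and_adjusted(X, y):
    """Return (R^2, adjusted R^2); X must include the intercept column."""
    n, cols = X.shape
    p = cols - 1                            # explanatory variables, intercept excluded
    beta_hat, *_ = np.linalg.lstsq(X, y, rcond=None)
    ss_res = np.sum((y - X @ beta_hat) ** 2)
    ss_tot = np.sum((y - y.mean()) ** 2)
    r2 = 1 - ss_res / ss_tot
    adj = 1 - (1 - r2) * (n - 1) / (n - p - 1)
    return r2, adj


rng = np.random.default_rng(5)
n = 40
x = rng.normal(size=n)
y = 1.0 + 2.0 * x + rng.normal(size=n)

X_small = np.column_stack([np.ones(n), x])
# Same model plus 5 regressors that are unrelated to y by construction.
X_big = np.column_stack([X_small, rng.normal(size=(n, 5))])

r2_small, adj_small = r2_and_adjusted(X_small, y)
r2_big, adj_big = r2_and_adjusted(X_big, y)

print(r2_small, adj_small)
print(r2_big, adj_big)
```

$R^2$ for the padded model is never below that of the small model, while $\bar R^2$ is penalized for the extra regressors and may well go down.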

References

Restricted Least Squares and Restricted Maximum Likelihood Estimators

http://fedc.wiwi.hu-berlin.de/fedc_homepage/xplore/tutorials/xegbohtmlnode18.html

迴歸分析 (Regression Analysis)

http://www.gotop.com.tw/epaper/e0719/AEM000900n.pdf
